Converged Credentials, or multi-application smart cards, are built on card architecture designs that perform multiple tasks across various ecosystems and domains. Their construction requires a thorough understanding of the issues facing security, utility, conflicts, and costs. This paper highlights some of the challenges that stem from competing standards, ecosystems, and stakeholders. It also covers conflicts in data mapping, card topologies, and data dictionaries.

Contents

- Introduction

- Standards That Matter

- Impact on Existing Infrastructures

- Stakeholders and Their Respective Budgets

- Card Data

- Data Mapping Challenges

- Encoding

- Card Topologies

- Biometrics that Matter

- Security

- The Mechanisms of Data Security

- Chip Card Security

- PKI vs. SKI and Electronic Signatures

- Visual Security

- Types of Physical Security

- Conclusion

- Glossary

- About CardLogix Corporation

Introduction

Converged credential systems are a growing trend among governments and enterprises. A single credential can point to and store important data with specialized security protections for a variety of applications, including Voter ID Cards, Driver Licenses, Electronic Healthcare Records (EHR), ePassports/MRTDs, transportation passes, and more. Converged credentials increase convenience by replacing multiple credentials with one strong smart card credential that performs multiple functions. These systems lower costs by issuing fewer cards in a single enrollment process. Departmental workflows become even more cost-effective when all systems communicate with a single abstracted identity software layer or database.

Despite these advantages, not all stakeholders welcome the new efficiencies, and the development of these systems can be challenging due to conflicts in data mapping, card topologies, existing infrastructures, and competing standards. Program managers have many stakeholders and technology variables to consider. When armed with the proper framework, a converged credential system is well worth the investment. The manager must have a thorough understanding of the ecosystems, standards, existing data systems and/or civil registries, stakeholders, data interoperability, and other relevant factors.

This white paper provides an overview of many of the elements involved in the development of a converged credential smart card system. The scope of this paper is limited to card-specific factors. The author assumes that roadmaps for a holistic approach to enrollments, registries, and other data management issues have been charted. Fundamental specifications, such as data dictionaries and authentication guidelines, are also assumed to be complete.

Standards That Matter

Standards are important. They help make products, data, and systems more interoperable and reliable. However, when standards are implemented without being fully understood, they can add unnecessary costs and potentially impair interoperability. This happens when a project reflexively cites standards in an RFP or a specification without understanding their core intention and benefit, which may or may not apply to that project’s specific needs or environment.

It is important to note that standards are built by committees of stakeholders and vendors that have a common goal and an investment in a particular point of view. This view may be contrary to your project’s goals. It is also important to note that the march of technology is relentless, and that the pace of updates to standards never meets that of the private sector. Standards that you should pay special attention to include:

- ICAO 9303

  - This standard, developed by the International Civil Aviation Organization (a specialized agency of the United Nations), defines MRTDs (Machine Readable Travel Documents), which include passports, border crossing cards, and visas. It covers data structures, encoding, and biometrics.

  - Why this is important: It has the widest support around the world. The 9303 specification includes particular references to ISO documents for fingerprint biometrics and identifying data.

- ISO 14443

  - This international standard defines contactless integrated circuit cards used for identification and the transmission protocols for communicating with them.

  - Why this is important: This document is the standard for contactless chip and reader hardware, and it underpins the NFC specification. It is a must-have for all contactless card programs.

- ISO 7816-4

  - This international standard defines the electronic command-response data pairs exchanged at the card’s interface, as well as the method of retrieving data elements in the card and the access control methods for the files and data in the chip. It also defines the structure and contents of the historical bytes that describe the operating characteristics of the card, and the structures for applications and data in the card as seen at the interface when processing commands. It does not cover the internal implementation within the card or the outside world.

  - Why this is important: All systems that utilize smart cards need to exchange data in a uniform protocol. Parts 1 through 4 of ISO 7816 cover this subject well.

- ISO 7810

  - This international standard defines the physical characteristics of identification cards. It contains specifications for physical dimensions and for resistance to bending, flame, chemicals, temperature, humidity, and toxicity. The standard includes test methods for resistance to heat.

  - Why this is important: It establishes a minimum TOE (Target of Evaluation) for card testing.

- CBEFF (Common Biometric Exchange Formats Framework)

  - This standard enables different biometric devices and applications to exchange biometric information between system components efficiently. To support biometric technologies in a common way, the CBEFF structure describes a set of necessary headers and data elements.

  - Why this is important: It establishes an interoperable methodology for interpreting a set of biometric data.

- HL7

  - HL7 is a set of international standards for the transfer of clinical and administrative data among software applications used by various healthcare providers.

  - Why this is important: It has established a methodology to code and define medical data safely and efficiently.

- EMV

  - EMV, named for Europay, MasterCard, and Visa, is the consortium standard that defines chip card payments for member banks.

  - Why this is important: It has established two distinct methods of authenticating a card at a point-of-sale card acceptance device. It has also established rules for moving data through payment gateways and banks to ensure security for all parties.

- Idblox

  - Idblox is a vendor-driven standard that supports ICAO and scalable card data mapping structures.

  - Why this is important: This group has established a common data dictionary with XML tags for identity based on prior specifications, along with rules for encoding and using data across multiple devices with out-of-the-box, COTS (Commercial Off-The-Shelf) components.

- ISO/IEC 18013 Parts 1-3 (Driver License)

  - This specification defines an “ISO Compliant Driver License” (IDL) that can perform the function of both the International Driving Permit (IDP) and the domestic driving license/permit. The standard was built around the EU Pictographic IDL.

  - Why this is important: When multiple languages are a barrier, this pictographic methodology makes identity proofing for determining driving privileges easier.

Impact on Existing Infrastructures

Standards are particularly important when merging a new credential program with an existing infrastructure. The card’s data and file structure must be pre-configured to work seamlessly with the readers and back-end systems. This fact is just as true for a physical access system, EMV banking terminal, or a border crossing station that accepts a credential.

For an accurate assessment of the impact a new program will have on an infrastructure, a manager should take inventory of all the different touch points and card acceptance devices already in place. The cost of many smart card readers has fallen to that of a wireless mouse, so the total impact of a change to readers may not be significant.

Consider: Are the current devices and software systems working well, and should they be upgraded? For example, if a program relies on a barcode to store a biometric, what is the fallback mode if the biometric cannot be read? This can happen due to a system failure or because a single modality, such as a fingerprint, is unusable after an injury. In another scenario, is the physical access system using a non-authenticated proximity or UID number from a card for building access? A manager should consider the total cost of changing hardware and software as well as the downstream costs of a data or building breach. Compliance may also be an issue when considering whether your current infrastructure meets best practices.

Rather than adapting to the older system, it may be better to replace it altogether. Implementing cards with multiple integration protocols can cost more, and deliver less, than building an entirely new system that grows with your needs. Such growth is not always possible when working within existing, proprietary physical access systems, for example. Additionally, sometimes a Card Not Present (CNP) transaction may be the best option. For example, if the card must operate as both a payment card and an ICAO-compliant card, switching to a mobile phone could be the better, lower-cost alternative to an expensive, multi-applet certified bank card.

Consequently, there are hard choices to be made regarding what can and cannot be altered in the converged credential system. This typically becomes a fiscal decision overlaid across the projections of downstream effects and adoption.

Stakeholders and Their Respective Budgets

Every organization or government faces multiple silos of independent budgets and concerns. A main concern of each department or ministry is the loss of control when the process is even partially out of their hands. For example, the production and delivery of the credentials may be handled by different departments or vendors. These are valid concerns and should be addressed by phasing in a credential over a period of time.

Costs are usually reduced by the use of a single credential and a single enrollment process. Departmental workflows can be made even more efficient by having all systems communicate with a single abstracted identity software layer or database. Even so, stakeholders may reject these efficiencies due to political conflicts of interest.

Card Data

Data Mapping Challenges

A chip card’s mapping or LDS (Logical Data Structure) is critical to achieving interoperability across domains. In smart card chips, data is stored in containers/files, and they are often put into logical groups based on a common security mechanism. The LDS describes how data is to be written to and formatted in the chip. The location of the stored data and keys and the enabled mechanisms to access this data need to be documented in the implementation documentation.

Most existing systems, such as ICAO and EMV, have already defined the card’s organization model. For a modified LDS, it is important to note that every file of an ISO 7816 smart card can have the following access attributes set:

- Read

- Write

- Update (a second or later write to the file)

- Invalidate

- Rehabilitate

Along with these simple attributes, a file’s contents may be encrypted. Each of a file’s attributes can use a different access mechanism to protect the data. This mechanism can be an authentication routine or a simple password. The flexibility available in the design of an LDS gives the program manager serious control over their data.
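To make the idea of per-attribute protection concrete, the following is a minimal sketch in Python. It models a card file whose operations each carry their own access condition. The class names, the condition names, and the `portrait` file are illustrative assumptions; they do not come from ISO 7816 or from any particular card operating system.

```python
from dataclasses import dataclass, field
from enum import Enum


class Operation(Enum):
    READ = "read"
    WRITE = "write"
    UPDATE = "update"
    INVALIDATE = "invalidate"
    REHABILITATE = "rehabilitate"


class Condition(Enum):
    ALWAYS = "always"        # no protection on this operation
    PIN = "pin"              # a simple password or PIN was presented
    AUTHENTICATED = "auth"   # an authentication routine succeeded
    NEVER = "never"          # operation is disabled


@dataclass
class CardFile:
    name: str
    # One access condition per operation; operations not listed are denied.
    access: dict = field(default_factory=dict)

    def is_allowed(self, op: Operation, satisfied: set) -> bool:
        """Return True if `op` is permitted given the conditions met so far."""
        cond = self.access.get(op, Condition.NEVER)
        if cond is Condition.ALWAYS:
            return True
        if cond is Condition.NEVER:
            return False
        return cond in satisfied


# Hypothetical file: anyone may read it, but changes need authentication.
portrait = CardFile(
    name="portrait",
    access={
        Operation.READ: Condition.ALWAYS,
        Operation.UPDATE: Condition.AUTHENTICATED,
        Operation.INVALIDATE: Condition.AUTHENTICATED,
    },
)

print(portrait.is_allowed(Operation.READ, set()))                        # True
print(portrait.is_allowed(Operation.UPDATE, {Condition.PIN}))            # False
print(portrait.is_allowed(Operation.UPDATE, {Condition.AUTHENTICATED}))  # True
```

A real card enforces these rules inside the chip; the sketch only shows why documenting each file’s conditions in the LDS matters to every application that touches the card.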

An additional point is that strong credentials include a Card Capability Container. This file behaves as a menu for outside applications. It describes the reading mechanism with which the credential must communicate.

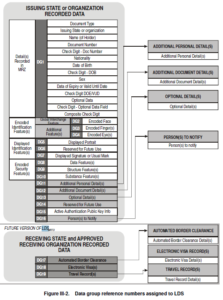

Figure 1 ICAO Data Groups as assigned to the ePassport’s or card’s LDS

Every specification that is implemented comes with its own pre-defined LDS.

The problem with merging credential functions that must each adhere to a specification is that their LDSs conflict in structure and container naming conventions. For example, an ePassport structure specifies that data be stored under one container, a DF (Dedicated File) identified by an AID (Application Identifier). This structure differs from, and conflicts with, other specifications and their pre-defined AIDs, such as the EU Driver License defined under ISO and the EMV specification. Selecting an AID is the first step in a data transaction.
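As an illustration of that first step, here is a minimal Python sketch that builds an ISO 7816-4 SELECT command for the ICAO eMRTD application and checks a response status word. The helper names are assumptions made for this example, and the sketch only constructs bytes; it does not talk to a reader.

```python
# AID registered for the ICAO eMRTD (ePassport) application.
EMRTD_AID = bytes.fromhex("A0000002471001")


def build_select_by_aid(aid: bytes) -> bytes:
    """Build a SELECT command APDU that selects an application by its AID."""
    if not 5 <= len(aid) <= 16:
        raise ValueError("an AID is 5 to 16 bytes long")
    # CLA=00, INS=A4 (SELECT), P1=04 (select by DF name), P2=0C (no response data)
    header = bytes([0x00, 0xA4, 0x04, 0x0C])
    return header + bytes([len(aid)]) + aid


def is_success(response: bytes) -> bool:
    """A response APDU ends with status word SW1 SW2; 90 00 means success."""
    return len(response) >= 2 and response[-2:] == b"\x90\x00"


apdu = build_select_by_aid(EMRTD_AID)
print(apdu.hex().upper())                  # 00A4040C07A0000002471001
print(is_success(bytes.fromhex("9000")))   # True
print(is_success(bytes.fromhex("6A82")))   # False (file or application not found)
```

A reader that expects a different AID sends a different SELECT command, which is why a card that serves several specifications must answer to each AID its readers will ask for.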

A very expensive yet viable solution is to use a smart card with abundant user memory. The same enrollment data must be written multiple times to distinct logical data structures or AIDs, each LDS complying with a unique specification. Traditionally, this is achieved with applets on a Java Card platform. While the multi-applet card meets the desired specifications, it sacrifices affordability and encoding efficiency by having to constantly re-write duplicate data to each applet in a larger and therefore more expensive card. If biometrics are involved in the program, each data element must use large areas of the chip’s available user memory to achieve interoperability. For example, an ICAO-compliant fingerprint has a size of at least 10K bytes. This problem is amplified when the data is written multiple times.

ICAO Recommended Data Element Sizes

| Face Photo | Each Stored Fingerprint | Each Stored Iris | Signature |
| --- | --- | --- | --- |
| 15 to 25K Bytes | 10K Bytes | 7K Bytes | 6K Bytes |

As you can see, it is very easy to use up the limited user memory on a card with 72K bytes. Data duplication, for the sake of achieving complete compliance, is not always advisable.
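The arithmetic can be sketched in a few lines of Python. The element sizes are the recommended figures from the table above, using the low end of the face photo range. The enrollment set (one face, two fingerprints, one signature), the reading of 1K as 1,024 bytes, and the omission of file-system overhead are assumptions made for illustration.

```python
KB = 1024  # assumption: 1K is read as 1,024 bytes

# Recommended element sizes from the table above (face photo at its low end).
SIZES = {
    "face": 15 * KB,
    "fingerprint": 10 * KB,
    "iris": 7 * KB,
    "signature": 6 * KB,
}

CARD_USER_MEMORY = 72 * KB


def bytes_needed(elements: dict, copies: int) -> int:
    """Memory consumed when the same enrollment data is written `copies` times."""
    one_copy = sum(SIZES[name] * count for name, count in elements.items())
    return one_copy * copies


# Hypothetical enrollment set: one face, two fingerprints, one signature.
enrollment = {"face": 1, "fingerprint": 2, "signature": 1}

for copies in (1, 2):
    need = bytes_needed(enrollment, copies)
    print(copies, need // KB, need <= CARD_USER_MEMORY)
# 1 41 True
# 2 82 False
```

Under these assumptions a single copy of the data fits comfortably, while writing it to just two separate structures already exceeds the card’s user memory.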

Other solutions are available that utilize a single LDS for multiple applications. The way the LDS is defined depends on the use case, its needs, its environment, and the nature of the data involved. If the file addresses for each standard do not conflict, for example, a single LDS or card file structure (CFS) can be constructed with multiple addresses and directories. Each DF within the card file structure is configured to meet the needs of one or more applications with separate protection mechanisms and privileges. In this scenario, no specification is compromised, and all data and details adhere. Other scenarios may require slight compromises in order to achieve a sophisticated converged credential that is largely interoperable but may not adhere perfectly to every unique specification. These credentials are built to meet the standards and specifications that are required for interoperability and that actually benefit the overall system.
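Whether a single structure is feasible can be checked mechanically once each specification’s file addresses are listed. The sketch below assumes each specification is reduced to a mapping of file identifier to content label. All identifiers and labels shown are hypothetical placeholders, not values taken from any standard.

```python
def conflicting_ids(spec_a: dict, spec_b: dict) -> set:
    """File identifiers that both specifications want to use."""
    return set(spec_a) & set(spec_b)


def merge_into_single_lds(specs: dict) -> dict:
    """Combine several file maps into one; refuse if any address collides."""
    merged = {}
    for spec_name, files in specs.items():
        for file_id, label in files.items():
            if file_id in merged:
                owner = merged[file_id][0]
                raise ValueError(
                    f"file {file_id:#06x} is claimed by both {owner} and {spec_name}"
                )
            merged[file_id] = (spec_name, label)
    return merged


# Hypothetical file maps, for illustration only.
travel = {0x0101: "holder details", 0x0102: "face image"}
health = {0x0201: "insurer", 0x0202: "blood type"}
license_ = {0x0102: "driving categories"}

print(conflicting_ids(travel, health))                           # set()
print(len(merge_into_single_lds({"travel": travel, "health": health})))  # 4
print(conflicting_ids(travel, license_))                         # {258}
```

When the check reports a collision, the choice is the one described above: duplicate data under separate applications, or accept a compromise against one of the specifications.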

Encoding

Aside from data mapping challenges, every project specification must describe how the data will be encoded or written to the chip. One data consideration is the determination of which file types should be used, such as the type of image file. For example, will your system use JPEG, JPEG 2000, PNG, or all of the above? The encoding method should not be confused with the loading method, which specifies explicit security mechanisms for loading applets and keys.

Your encoding software must ensure a traceable and auditable chain of trust for the data and cards. These features protect your system from human-related data breaches and help strengthen your processes.
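One common way to make an encoding trail auditable is a hash-chained log, in which every entry commits to the entry before it. The following is a minimal sketch of that general technique using Python’s standard library. It is not a description of any particular encoding product, and the event fields are hypothetical.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder hash that precedes the first entry


def _entry_hash(prev_hash: str, event: dict) -> str:
    payload = json.dumps(event, sort_keys=True)
    return hashlib.sha256((prev_hash + payload).encode("utf-8")).hexdigest()


def append_event(log: list, event: dict) -> dict:
    """Append an event whose hash covers both the event and the previous hash."""
    prev_hash = log[-1]["hash"] if log else GENESIS
    entry = {"event": event, "prev": prev_hash, "hash": _entry_hash(prev_hash, event)}
    log.append(entry)
    return entry


def verify_log(log: list) -> bool:
    """Recompute every hash; any edited or removed entry breaks the chain."""
    prev_hash = GENESIS
    for entry in log:
        if entry["prev"] != prev_hash:
            return False
        if entry["hash"] != _entry_hash(prev_hash, entry["event"]):
            return False
        prev_hash = entry["hash"]
    return True


log = []
append_event(log, {"card": "0001", "operator": "op-17", "action": "encode"})
append_event(log, {"card": "0001", "operator": "op-17", "action": "verify"})
print(verify_log(log))   # True

log[0]["event"]["operator"] = "op-99"   # simulate tampering with the record
print(verify_log(log))   # False
```

A chain like this detects edits only if the most recent hash is also kept somewhere the editor cannot reach, such as an HSM or a separate system.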

Card Topologies

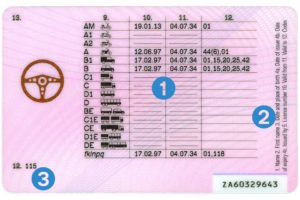

Simply stated, a card’s topology is the map of the variable data elements that are printed on the front and the back of the card. The topologies are largely dictated by standards. Portrait orientation is most commonly used for employee badges, and landscape orientation is used for all other credentials. The ICAO standard for an ID card topology is shown in Figures 2 and 3 with all of the data elements listed. Large standards use guidelines. The most important part of all of these standards is the placement and printing of any machine-readable barcode or MRZ (Machine Readable Zone). The smart card chip, visible on the front side of a contact smart card, is by design fixed in the same location on the card, but the graphics and card orientation can vary around that design element, the chip module.
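Part of what makes the MRZ machine-verifiable is its check digits. ICAO 9303 computes them with repeating weights of 7, 3, and 1 over the characters of a field, taken modulo 10. The sketch below implements that calculation in Python; the function name is an assumption, and the sample values are those printed on the specimen document commonly shown in ICAO 9303.

```python
def mrz_check_digit(field: str) -> int:
    """Compute an ICAO 9303 check digit for one MRZ field."""
    weights = (7, 3, 1)
    total = 0
    for i, ch in enumerate(field):
        if "0" <= ch <= "9":
            value = int(ch)
        elif "A" <= ch <= "Z":
            value = ord(ch) - ord("A") + 10   # A=10 ... Z=35
        elif ch == "<":
            value = 0                          # filler character
        else:
            raise ValueError(f"invalid MRZ character: {ch!r}")
        total += value * weights[i % 3]
    return total % 10


print(mrz_check_digit("L898902C3"))   # 6 (document number)
print(mrz_check_digit("740812"))      # 2 (date of birth, YYMMDD)
print(mrz_check_digit("120415"))      # 9 (date of expiry, YYMMDD)
```

A reader that recomputes these digits can reject a misprinted or misread MRZ before any chip transaction begins, which is one reason MRZ placement and print quality matter so much.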

The EU Driver License has migrated toward a pictographic style card due to problems of multiple languages in a small region.

Figure 4 Back of an EU Driver License

The banking industry has become more focused on consumer branding than on specific data element placement. Due to the increased use of chip cards, the banking and EMV standard has become more relaxed to allow additional space on the card for company branding. Additionally, embossing cards for carbon-paper imprinting is no longer needed.

Biometrics that Matter

There are many biometric modalities and various matching technologies with which to authenticate subjects. ICAO lists over 20 types that can be used in optional data containers. The biometric modalities that truly matter include:

- Face (Portrait photo)

This is the most commonly used identifier in the world.

- Faceprint (Template of the face)

There is no standardized matching algorithm. Facebook has the largest deployment in the world. When considering the use of faceprints, a liveness test (e.g., blinking) is needed to prevent spoofing.

- Fingerprint (Template and image of the fingerprint)

Fingerprints have proven very reliable over time, with dependable false acceptance and false rejection rates. They are ideal for a population that is over the age of 16 and under the age of 55. The prints will change with a person’s age.

- Iris (Template and images of the iris)

Iris images are reliable throughout a lifetime for an individual beginning at the age of 6 months.

- Signature (Image of the signature)

The signature is commonly printed on the credential, but it is not very secure as a valid comparison, as this mechanism requires specific training for personnel to use effectively.

Interoperability with biometrics is a common problem in credential deployments. The problems typically arise from using proprietary vendors’ systems to save costs. In the long run, the cost of not collecting, saving, and de-duplicating the data within standards is very high. By adhering to the ICAO, CBEFF, and ANSI standards, a manager will save on hardware costs by having multiple vendors for each product deployed.

Security

The purpose of all smart cards is to keep data secure and to remain active for a variety of transactions. It is important to note that cards are only as strong as the processes that use them. When using a card in a transaction, there are two paths for credential verification:

- Physical validation of the credential by examining the card’s security features

- Logical validation of the credential by examining the card’s data and comparing it to a known state (Authentication, biometric matching, or both)

A credential management system, like any data network, inherently involves the following main elements:

Hardware, including card acceptance devices, servers, HSMs, redundant mass storage devices, communication channels and lines, hardware tokens (including smart cards), SAMs, and remotely located devices (e.g. thin clients or internet appliances) serving as interfaces between users and computers.

Software, including operating systems, database management systems, development tools, card issuance software, card operating systems, and communication and security application programs.

Data, including databases containing financial information, enrollment data, AFIS and De-Duplication systems, plus all customer or end user-related information.

Personnel, to act as originators and/or users of the data. This includes professional personnel, clerical staff, administrative personnel, and computer staff.

The Mechanisms of Data Security

Working with the above elements, an effective data security system uses the following key mechanisms to answer these questions:

- Has my data arrived intact? (Data Integrity). Data integrity ensures that data was not lost or corrupted during transmission from the sender to the receiver.

- Is the data correct, and does it come from the right person? (Authentication). Authentication mechanisms verify user or system identities so that only authorized individuals can access the data.

- Can I confirm receipt of the data and the sender’s identity back to the sender? (Non-Repudiation). Non-repudiation ensures that a sender cannot later deny having sent the data.

- Can I keep this data private? (Confidentiality). Data confidentiality prevents unauthorized senders and receivers from interpreting the data. This is typically ensured by employing one or more encryption techniques to secure your data.

- Can I safely share this data if I choose? (Authorization and Delegation). You must be able to set and manage data access privileges for additional users and groups.

- Can I verify that the system is working? (Auditing and Logging). You should be able to continually monitor and troubleshoot security system functions.

- Can I actively manage the system? (Management). Your security system must include administrative management capabilities.

- Is this an officially issued card and is it unique? (Uniqueness). Without a unique serial number per credential, it is difficult to know whether or not a given card was officially issued.

- Is this card bound to this individual? Does this card belong to the authorized cardholder? (Binding). A subject may identify himself or herself as the owner of the card, but without a method to check him or her against information contained on the card or in the card’s electronic storage, you cannot know for sure. Photo IDs and signatures are often used, but utilizing matching technologies with stronger biometric modalities, such as a fingerprint, is more reliable.
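The first two questions can be illustrated with a keyed hash. The sketch below uses HMAC-SHA-256 from Python’s standard library to tag a record and to verify it on receipt. The function names and the record contents are hypothetical, and the hard-coded key is for demonstration only; in a deployed system the key would live in the card, a SAM, or an HSM.

```python
import hashlib
import hmac

# Demo key only; real keys are generated and stored in secure hardware.
DEMO_KEY = bytes.fromhex("00112233445566778899aabbccddeeff")


def make_tag(key: bytes, data: bytes) -> bytes:
    """Compute an HMAC-SHA-256 tag over the data."""
    return hmac.new(key, data, hashlib.sha256).digest()


def verify_tag(key: bytes, data: bytes, received_tag: bytes) -> bool:
    """Check the tag in constant time; False if the data or tag was altered."""
    return hmac.compare_digest(make_tag(key, data), received_tag)


record = b"card=0001;name=DOE<<JANE;expires=2030-01-01"
tag = make_tag(DEMO_KEY, record)

print(verify_tag(DEMO_KEY, record, tag))                          # True
print(verify_tag(DEMO_KEY, record.replace(b"2030", b"2040"), tag))  # False
```

A matching tag shows that the record is intact and that it came from a party holding the shared key, which is the sense in which one mechanism can serve both integrity and authentication.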

Best Practices for Smart Cards include:

| # | Mechanism | Card Function | Standards | Best Algorithms |
| --- | --- | --- | --- | --- |
| 1 | Data Integrity | eSignatures | FIPS 198-1, FIPS 186-4 | HMAC or Digital Signatures |
| 2 | Authentication | Authentication | FIPS 180-4 | SHA-1 or SHA-256 |
| 3 | Non-Repudiation | eSignatures | FIPS 198-1, FIPS 186-4 | HMAC or Digital Signatures |
| 4 | Confidentiality | Encryption | FIPS 197 (modules validated to FIPS 140-2) | AES 128, 192, 256 |
| 5 | Authorization and Delegation | Card Data Mapping (LDS) | Market specific | No specification |
| 6 | Auditing and Logging | Transport Keys, Forensics | N/A | No specification |
| 7 | Management | Administrator Keys | N/A | No specification |
| 8 | Uniqueness | GUID, PKD Hashes | ISO/IEC 9834-8, IETF RFC 4122 | ASN.1 Object Identifier components (ITU-T Rec. X.667- |
| 9 | Binding | Biometric Storage | ICAO 9303 | ANSI 378, WSQ |
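For the uniqueness row, the following minimal sketch shows how a GUID of the kind described in RFC 4122 can be generated with Python’s standard `uuid` module and expressed as an ASN.1 object identifier under the `2.25` arc defined for UUIDs in ITU-T Rec. X.667. The function names are assumptions made for this example, and the choice of a random (version 4) identifier is one option among several.

```python
import uuid


def new_credential_id() -> uuid.UUID:
    """Generate a random (version 4) identifier for a newly issued credential."""
    return uuid.uuid4()


def as_oid(identifier: uuid.UUID) -> str:
    """Express a UUID as an OID: the 2.25 arc followed by its 128-bit integer."""
    return f"2.25.{identifier.int}"


card_id = new_credential_id()
print(card_id)            # a different value on every run
print(card_id.version)    # 4
print(as_oid(card_id))
```

Recording this identifier at issuance, both on the card and in the registry, gives verifiers something to check when asking whether a given card was officially issued.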

Chip Card Security

The semiconductor chip is the heart of a smart card system, and it is the security vault for your data. The trusted silicon, layers of software, and most importantly, the COS (Card Operating System), protect this data. When comparing card alternatives, evaluate:

- Chip architecture and layout of the silicon (Memory vs. Microprocessor-CPU)

- Maturity of the Card Operating System. Can the card vendor provide technical support for the card, or must they call a third party when questions arise?

- Anti-tearing transaction mechanisms in the card OS and chip

- Virtualization of the data across the chip’s Non Volatile Memory

- Effective Card Operating System security features (e.g., Global PINs, Transport Keys, BAC, and PACE)

- Appropriately executed card data models (Full compliance to specifications when needed)

- Security countermeasures in hardware

- Active encryption mechanisms (Full support for on-card and card-to-device, one time encrypted sessions)

- On-Chip Attack detection

- Secure key management (No fixed key locations in memory)

PKI vs. SKI and Electronic Signatures

PKI (Public Key Infrastructure) systems are prevalent in Europe and have been deployed in a few notable civil ID programs around the world. A large PKI, multi-use credential project was implemented in the U.S. called PIV. This project harmonized different employee IDs across federal departments, including contractors. The cards store biometrics and multiple certificates for digital signatures, and they enable access to secure facilities.

After 10 years of deployment, the base cost of the credential is just under 5 USD per card at a volume of 10 million cards. Loading, printing, and encoding each employee’s card increases the cost to just under 50 USD per card. The inclusion of multiple certificates and revocation authority services also increases costs and complexity. Digital signatures are used in other deployments about as frequently as in the U.S. The need for a PKI credential is typically driven by the desire to electronically sign a data package or transaction with a unique credential, transmit the signature through any network, and then verify the authenticity of the sender. By using PKI, key management complications are reduced. Interestingly, the implementation of digital signatures, a core security feature of PKI, is still under 25% after 10 years on the market. The requirement for a PKI credential system may have been overreaching.

In general, PKI performs well for a many-to-many set of relationships where digitally signed transactions occur across a large open ecosystem (approximately 5-100 million users). SKI (Symmetric Key Infrastructure) performs well for a one-to-many set of relationships where digitally signed transactions occur between a government and its citizens or an enterprise and its members.

Today, modern SKI systems offer strong eSignatures, which are similar to digital signatures. These systems use a centralized issuer, and they are not subject to the key management problems of older systems. New SKI systems can deliver secure FIPS-approved eSignatures without exposing the keys to any computing environment by deploying vaulted and threshold key protection.
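The many-to-many versus one-to-many distinction can be made concrete by counting keys. The sketch below is a simplified model with assumptions stated in the comments: it counts only long-term keys, ignores certificates, revocation, and key derivation, and uses a hypothetical population of 1,000 participants.

```python
def pairwise_symmetric_keys(n: int) -> int:
    """Shared secrets needed if every pair of n parties shares its own key."""
    return n * (n - 1) // 2


def pki_key_pairs(n: int) -> int:
    """Key pairs needed if each of n parties holds one public/private pair."""
    return n


def issuer_symmetric_keys(n: int) -> int:
    """Secrets needed if one central issuer shares a key with each of n members."""
    return n


members = 1000
print(pairwise_symmetric_keys(members))  # 499500 (many-to-many, symmetric only)
print(pki_key_pairs(members))            # 1000   (many-to-many, PKI)
print(issuer_symmetric_keys(members))    # 1000   (one-to-many, central issuer)
```

Under this simplified model, symmetric keys become unmanageable only when every party must transact directly with every other party. When all transactions run between a single issuer and its members, the symmetric key count grows no faster than it does with PKI.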

Visual Security

Understanding the value of your credential program is essential to setting the level of graphic security for your card. The most common mistake is to save incremental printing costs while leaving to chance the much higher cost of fraudulent card duplication. Things to consider when budgeting graphics include:

- Loss of customer confidence if security is breached or the card is even partially duplicated

- Content replacement costs: value (points, currency) or secure access data (passwords)

- Associated costs: programming, artwork, collateral, promotion

- Distribution costs: retail, mailing

- Replacement costs

CardLogix recommends establishing a high threshold of security that prevents attacks, rather than hedging on low security and hoping for good luck. For more information, see our Graphics and Security Printing Guide.

Types of Physical Security

Overt (Level 1): Overt security features, or ‘sensory’ features, are designed to easily identify a credential with little training and no tools. Examples include color-shifting inks, tactile bumps, holograms, latent images, watermarks, and visible security threads. Because they are easily recognized, these are the easiest types of features to validate.

Covert (Level 2): Covert security features are not obvious to the naked eye and require simple tools and/or some training to authenticate a credential. Examples include UV fluorescent inks and the CardLogix Card Validator®. Another example is an intentional error. These credential features are extremely secure and hard to duplicate, as they require multiple-factor validation and skilled examination. Covert security features offer a good balance of security and ease of verification.

Forensic (Level 3): The presence of forensic security features is well-concealed, and the features cannot be normally detected without highly specialized equipment. One example is an ink tagged with an unusual and rare material that responds only to a very specific light wavelength.

Conclusion

There are many factors to consider when building a multi-functional, converged credential. From adoption to budgets, most of these issues have been observed in some manner by many managers across the globe. The best advice this author can give is to be fully cognizant of your current systems and the standards that your cards must live within, and to talk to your vendors about all the available options to meet your goals.

Glossary

| Term | Definition |
| --- | --- |
| ANSI | The American National Standards Institute. ANSI is a private, non-profit organization that oversees the development of voluntary consensus standards for products, services, processes, systems, and personnel in the United States. |
| Anti-Tearing | A mechanism in a smart card that prevents damage to data if the card is prematurely removed from a reader. |
| Applet | A very small application with a corresponding data map for use in Java-based smart cards, performing one or a few simple functions. |
| BAC | Basic Access Control is a mechanism specified to ensure that only authorized parties can read personal information from passports via a contactless RFID chip through the use of the ICAO-specified algorithms and methods. |
| CBEFF | The Common Biometric Exchange Formats Framework (CBEFF) was developed from 1999 to 2000 by the CBEFF Development Team (NIST) and the BioAPI Consortium. This standard enables different biometric devices and applications to exchange biometric information between system components efficiently. To support biometric technologies in a common way, the CBEFF structure describes a set of necessary data elements. |
| Data Dictionary | A set of information describing the contents, format, and structure of a database and the relationship between its elements. It is used to control access to and manipulation of the database. |
| Data Mapping | With regard to computing and data management, data mapping is the process of creating data element mappings between two distinct data models. For smart cards, this involves the logical data structure and a corresponding external application. |
| FIPS | Federal Information Processing Standards (FIPS) are publicly announced standards developed by the United States federal government for use in computer systems by non-military government agencies and government contractors. |
| Global PINs | A mechanism in a smart card that prevents access to all files unless a number/PIN is presented that matches the preset user number/PIN. |
| HSM | Hardware Security Module. An HSM is a physical computing device that safeguards and manages digital keys for strong authentication. |
| ICAO | International Civil Aviation Organization. ICAO is a specialized agency of the United Nations. It codifies the principles and techniques of international air navigation and fosters the planning and development of international air transport to ensure safe and orderly growth. |
| ISO | The International Organization for Standardization (ISO) is an international standard-setting body composed of representatives from various national standards organizations. |
| Java Card | Java Card refers to a software technology owned by Oracle Corporation that allows Java-based applications (applets) to be run securely on smart cards and similar small-memory-footprint devices. |
| LDS | A Logical Data Structure standardizes the placement of data within a smart card’s operating system. It also describes the access control mechanisms, indicating how data is protected, by which algorithms, and by which corresponding keys. The LDS is sometimes called the Card File Structure (CFS) when referring to microprocessor smart cards. |
| NFC | Near Field Communication (NFC) is a short-range wireless connectivity standard (Ecma-340, ISO/IEC 18092) that uses magnetic field induction to enable communication between devices when they’re touched together or brought within a few centimeters of each other. This standard works within the ISO 14443 specification regarding protocols. |
| PACE | Password Authenticated Connection Establishment (PACE) is the mechanism that upgrades BAC. It is specified in the 2015 edition of ICAO 9303 to ensure that only authorized parties can contactlessly read personal information from passports with an RFID chip through the use of the ICAO-specified algorithms and methods. |
| PKI | A Public Key Infrastructure (PKI) is a set of hardware, software, people, policies, and procedures needed to create, manage, distribute, use, store, and revoke digital certificates and manage public-key encryption. |
| SAMs | Secure Access Module. A type of smart card in a SIM format. Built with Trusted Silicon, the SAM stores keys at a Point of Sale (POS) terminal. |
| SKI | SKI is similar to PKI and used for the same purposes. In a Symmetric Key Infrastructure, the same key is used for encrypting and decrypting a message. |
| Transport Keys | A type of key incorporated into a smart card file system to secure end-user data during shipment from the factory until receipt by the end-user. |
| UID | A Unique Identifier (UID) is a number assigned by a manufacturer to its cards. Cards that are NFC-compliant must carry a 4-, 7-, or 10-byte UID. The UID is not as strong as a GUID that meets an ISO/IEC standard. |
| XML | Extensible Markup Language (XML) is a markup language that defines a set of rules for encoding documents in a format that is both human-readable and machine-readable. Typically, a tag is assigned to a data element. |

About CardLogix Corporation

Since 1998, CardLogix has manufactured millions of cards that have shipped to over 42 countries around the world. With expertise in card and chip technology, as well as in card operating systems, biometrics, software, development tools, and middleware, CardLogix has continuously been at the forefront of smart card technology.

The company responds to market opportunities with creative design and ISO quality manufacturing for Trusted ID and Transaction Systems.

CardLogix creates value for customers by providing easy to deploy technology that ensures that transactions needing identity are frictionless, authenticated, bound to a specific individual and 100% accurate.

16 Hughes, Irvine, California 92618, United States +1-949-380-1312

Web: cardlogix.com | idblox.com | smartcardbasics.com | smarttoolz.com